

Big Brother on a budget: How Internet surveillance got so cheap

Deep packet inspection, petabyte-scale analytics create a “CCTV for networks.”

by Sean Gallagher – This feature originally ran on August 28, 2012 on Ars Technica

When Libyan rebels finally wrested control of the country away from its mercurial dictator last year, they discovered that the Qaddafi regime had received an unusual gift from its allies: foreign firms had supplied technology that allowed security forces to track nearly all of the online activities of the country’s 100,000 Internet users. That technology, supplied by a subsidiary of the French IT firm Bull, used a technique called deep packet inspection (DPI) to capture the e-mails, chat messages, and Web visits of Libyan citizens.

The fact that the Qaddafi regime was using deep packet inspection technology wasn’t surprising. Many governments have invested heavily in packet inspection and related technologies, which allow them to build a picture of what passes through their networks and what comes in from beyond their borders. The tools secure networks from attack—and help keep tabs on citizens.

Narus, a subsidiary of Boeing, supplies “cyber analytics” to a customer base largely made up of government agencies and network carriers. Neil Harrington, the company’s director of product management for cyber analytics, said that his company’s “enterprise” customers (agencies of the US government and large telecommunications companies) are “more interested in what’s going on inside their networks” for security reasons. But some of Narus’ other customers, like Middle Eastern governments that own their nations’ connections to the global Internet or control the companies that provide them, “are more interested in what people are doing on Facebook and Twitter.”

Surveillance perfected? Not quite, because DPI imposes its own costs. While deep packet inspection systems can be set to watch for specific patterns or triggers within network traffic, each specific condition they watch for requires more computing power—and generates far more data. So much data can be collected that the DPI systems may not be able to process it all in real time, and pulling off mass surveillance has often required nation-state budgets.

Not anymore. Thanks in part to tech developed to power giant Web search engines like Google’s (analytics and storage systems that generally get stuck with the label “big data”), “big surveillance” is now within reach even of organizations like the Olympics.

Network security camera

The tech is already helping organizations fight the ever-rising threat of hacker attacks and malware. The organizers of the London Olympic games, in an effort to prevent hackers and terrorists from using the games’ information technology for their own ends, undertook one of the most sweeping cyber-surveillance efforts ever conducted privately. In addition to the thousands of surveillance cameras that cover London, there was a massive computer security effort in the Games’ Security Operation Centers, with systems monitoring everything from network infrastructure down to point-of-sale systems and electronic door locks.


The logs from those systems generated petabytes of data before the torch was extinguished. They were processed in real time by a security information and event management (SIEM) system that used “big data” analytics to look for patterns that might indicate a threat, triggering alarms swiftly when such a threat was found.

The combination of the sophisticated analytics and massive data storage in big data systems with DPI network security technology has created what Dr. Elan Amir, CEO of Bivio Networks, calls “a security camera for your network.”

“There’s no question that within the next three to five years, not having a copy of your network data will be as strange as not having a firewall,” Amir told me.

“The danger here,” Electronic Frontier Foundation Technology Projects Director Peter Eckersley told Ars, “is that these technologies, which were initially developed for the purpose of finding malware, will end up being repurposed as commercial surveillance technology. You start out checking for malware, but you end up tracking people.”

Unchecked, Eckersley said, companies or rogue employees of those companies will do just that. And they could retain data indefinitely, creating a whole new level of privacy risk.

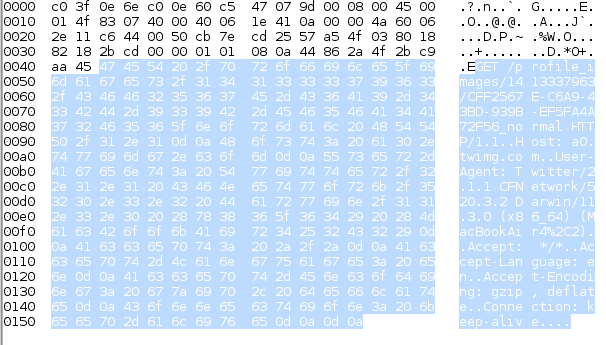

How deep packet inspection works

As we send e-mails, search the Web, and post messages and comments to blogs, we leave a digital trail. At each point where Internet communications are received and routed toward their ultimate destination, and at each server they touch, security and systems operations tools give every transactional conversation anything from a passing frisk to the equivalent of a full strip search. It all depends on the tools used and how they’re set up.

One of the key technologies that drives these tools is deep packet inspection. A capability rather than a tool itself, DPI is built into firewalls and other network devices. Deep packet inspection and packet capture technologies revolutionized network surveillance over the last decade by making it possible to grab information from network traffic in real time. DPI makes it possible for companies to put tight limits on what their employees (and, in some cases, customers) can do from within their networks. The technology can also log network traffic that matches rules set up on network security hardware: rules based on the network addresses that the traffic is going to, the type of traffic itself, or even keywords and patterns within its contents.

“Almost everything interesting happening in networking, especially with a slant toward cyber security, has some DPI embedded in it, even if people aren’t calling it that,” said Bivio’s Amir. “It’s a technology and a discipline that captures all of the processing and network activity that’s getting done on network traffic outside of the standard networking elements of packets—the addressing and routing fields. What gets people riled up a bit is the ‘inspection’ part, because somehow inspection has negative connotations.”

To understand how DPI works, you first have to understand how data travels across networks and the Internet. Regardless of whether they’re wired or wireless, Internet-connected networks generally use Internet Protocol (IP) to handle routing data between the computers and devices attached to them. IP sends data in chunks called packets: blocks of data preceded by handling and addressing information that lets routers and other devices on the network know where the data came from and where it’s going. That addressing information is often referred to in the networking world as Layer 3 data, a reference to its definition within the Open Systems Interconnection (OSI) network model.
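Here is a minimal Python sketch that makes the Layer 3 idea concrete by decoding the fixed 20-byte IPv4 header with only the standard library. It makes three assumptions: the input is a raw IPv4 packet with no link-layer framing in front of it, IP options are ignored, and the sample addresses come from the documentation-only ranges. The function name `parse_ipv4_header` is illustrative and not part of any product described here.

```python
import socket
import struct

def parse_ipv4_header(packet: bytes) -> dict:
    """Decode the fixed 20-byte IPv4 header: the Layer 3 data routers act on."""
    if len(packet) < 20:
        raise ValueError("too short to hold an IPv4 header")
    # Network byte order: version/IHL, TOS, total length, ID, flags/fragment,
    # TTL, protocol, checksum, source address, destination address.
    (ver_ihl, _tos, total_len, _ident, _frag,
     ttl, proto, _csum, src, dst) = struct.unpack("!BBHHHBBH4s4s", packet[:20])
    return {
        "version": ver_ihl >> 4,
        "header_len": (ver_ihl & 0x0F) * 4,  # IHL counts 32-bit words
        "total_len": total_len,
        "ttl": ttl,
        "protocol": proto,                  # 6 = TCP, 17 = UDP
        "src": socket.inet_ntoa(src),
        "dst": socket.inet_ntoa(dst),
    }

# A hand-built header for illustration (checksum left as zero).
sample = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40, 1, 0, 64, 6, 0,
                     socket.inet_aton("192.0.2.10"),
                     socket.inet_aton("198.51.100.7"))
print(parse_ipv4_header(sample))
```

A router needs only these fields. Everything after `header_len` bytes is payload, and that payload is what "deep" inspection goes on to examine.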

The OSI Layers of an Internet data packet

| OSI LAYER | NAME | DESCRIPTION |
|---|---|---|
| Layer 1 | Physical | The format for transmitting data across the networking medium, defining how bits are passed across it. The radio specifications in WiFi (802.11) are physical layer standards. |
| Layer 2 | Data link | Within a network segment, handles physical addressing (the media access control, or MAC, addresses of devices on the network) and communication between those devices. Ethernet and Point-to-Point Protocol are data link protocols. |
| Layer 3 | Network | Handles the logical addressing and routing of data, based on software-assigned addresses rather than hardware ones. Internet Protocol headers are the Layer 3 data in a packet. |
| Layer 4 | Transport | Transport protocols such as the Transmission Control Protocol (TCP) and the User Datagram Protocol (UDP) identify the port a packet is bound for and provide error checking; TCP also provides recovery of lost data and flow control. |
| Layer 5 | Session | Handles communications between applications, such as remote procedure calls, inter-process communications like “named pipes,” and the SOCKS (Socket Secure) proxy protocol. |
| Layer 6 | Presentation or Syntax | Data formatting, serialization, compression, and encryption services, like the Multipurpose Internet Mail Extensions (MIME) format. |
| Layer 7 | Application | The data sent for specific applications, in formats such as HTTP for requesting and delivering Web content, File Transfer Protocol (FTP), IMAP and SMTP for mail, and other application-specific formats. |

Internet routers generally just look at Layer 3 data to determine which network path a packet gets relayed down. Network firewalls look a little deeper into the data when deciding whether to let packets pass onto the networks they protect. Packet-filtering firewalls typically look at Layer 3 and Layer 4, checking which transport protocol (such as TCP or UDP) a packet uses and which port number it is addressed to. Ports are defined in the TCP or UDP header rather than by IP itself, and a port is commonly associated with a specific application; port 80, for example, is usually associated with Web services.
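A packet-filtering rule set of the kind described above fits in a few lines. This is a hypothetical first-match policy, not any vendor's rule syntax. Each rule names an optional transport protocol and destination port, and the filter never looks past those header fields.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rule:
    action: str                     # "allow" or "deny"
    protocol: Optional[str] = None  # "tcp", "udp", or None for any protocol
    dst_port: Optional[int] = None  # None matches any port

def filter_packet(rules, protocol: str, dst_port: int, default: str = "deny") -> str:
    """First-match evaluation using only Layer 3/4 header fields."""
    for rule in rules:
        if rule.protocol is not None and rule.protocol != protocol:
            continue
        if rule.dst_port is not None and rule.dst_port != dst_port:
            continue
        return rule.action
    return default

# Hypothetical policy: Web, HTTPS, and DNS out; everything else denied.
policy = [
    Rule("allow", "tcp", 80),   # Web
    Rule("allow", "tcp", 443),  # HTTPS
    Rule("allow", "udp", 53),   # DNS
]
print(filter_packet(policy, "tcp", 80), filter_packet(policy, "tcp", 22))
```

Note that `filter_packet` lets anything addressed to TCP port 80 through, whatever it actually contains. That blind spot is what application-layer inspection addresses.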

Application-layer firewalls, which emerged in the 1990s, look still deeper into network traffic. These set rules for network traffic based on the specific type of application the data within the packet was for. Application firewalls were the first real “deep packet inspection” devices, checking the application protocols within the packets themselves, as well as searching for patterns or keywords in the data they contain.
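Simple prefix signatures illustrate the step from port numbers to payload inspection. The byte patterns below are well-known protocol openings:

- an HTTP request or response line
- the `SSH-` version banner
- the BitTorrent handshake's length byte followed by `BitTorrent protocol`
- an SMTP client greeting

The table and function names, however, are a toy illustration. Production DPI engines use far larger signature sets and stateful protocol parsers.

```python
# Illustrative byte signatures: a protocol name and payload prefixes that mark it.
SIGNATURES = [
    ("http", (b"GET ", b"POST ", b"HEAD ", b"HTTP/1.")),
    ("ssh", (b"SSH-",)),
    ("bittorrent", (b"\x13BitTorrent protocol",)),
    ("smtp", (b"EHLO ", b"HELO ")),
]

def classify_payload(payload: bytes) -> str:
    """Name the application protocol from the payload itself, ignoring the port."""
    for app, prefixes in SIGNATURES:
        if payload.startswith(prefixes):  # bytes.startswith accepts a tuple
            return app
    return "unknown"

def find_keywords(payload: bytes, keywords: list) -> list:
    """Case-insensitive keyword search inside the payload."""
    text = payload.lower()
    return [k for k in keywords if k.lower() in text]

print(classify_payload(b"\x13BitTorrent protocol" + bytes(8)))
print(find_keywords(b"Subject: Protest on Friday", [b"protest", b"rally"]))
```

Because `classify_payload` keys on content, a BitTorrent handshake sent over port 80 is still labeled `bittorrent`.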

Traffic cops vs. traffic spies

Where DPI devices sit in the network flow varies based on their purpose. DPI-based “stateful” firewalls briefly delay, or buffer, packets to check the traffic stream as it passes through. Other systems designed for deeper analysis of network content tend to passively collect packet data as it streams through a network chokepoint, then send instructions to the firewall and other security appliances when they find something amiss.
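A pattern can straddle packet boundaries, which is one reason in-line systems buffer traffic. The sketch below keeps the last few bytes of each flow, keyed by its 5-tuple, so a keyword split across two packets is still found. The class and variable names are hypothetical, and the sketch assumes packets arrive in order. Real stream reassembly must also handle TCP sequence numbers, retransmissions, and reordering.

```python
from collections import defaultdict

class StreamScanner:
    """Carry the tail of each flow forward so a pattern split across packets still matches."""

    def __init__(self, pattern: bytes):
        self.pattern = pattern
        self.tails = defaultdict(bytes)  # flow key -> last len(pattern)-1 bytes seen

    def feed(self, flow, payload: bytes) -> bool:
        data = self.tails[flow] + payload
        hit = self.pattern in data
        keep = len(self.pattern) - 1     # too short to hold a full match on its own
        self.tails[flow] = data[-keep:] if keep else b""
        return hit

flow = ("10.0.0.5", 51515, "203.0.113.9", 80, "tcp")  # src, sport, dst, dport, proto
packets = [b"...GET /secret-pro", b"ject/plan.pdf HTTP/1.1"]

naive = any(b"secret-project" in p for p in packets)   # per-packet check misses it
scanner = StreamScanner(b"secret-project")
buffered = [scanner.feed(flow, p) for p in packets]     # second packet completes the match
print(naive, buffered)
```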

The advantage that in-line DPI systems have is that holding the packets in buffer allows them to handle the packets themselves before they’re sent on their way—intercepting their content, and repackaging it, “forging” packets with new data or removing data from within packet streams before it passes, altering data in flight. Spam-blocking firewalls, for example, use DPI to identify inbound e-mail message streams and check their headers and content for known spammers, viruses, phishing attacks, and other potentially harmful content. The firewall then reroutes those messages to quarantine or remove attachments entirely.

Web-filtering firewalls check outbound and inbound Web traffic for visits to sites that violate certain policies, or watch for Web-based malware attacks. Bivio’s Network Content Control System, for example, uses in-line DPI to allow network customers to set “parental controls” on their Internet traffic, evaluating the domains of websites as well as the content itself for adult or objectionable material within social networking sites and blogs. The system can also block “pharming” attacks, which use malicious DNS servers to redirect Web requests to another server (such as the rogue DNS servers used by the DNSChanger botnet).
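Domain-based filtering of this sort usually has to match parent domains as well as exact hostnames, so a block on one domain also covers its subdomains. Here is a minimal sketch with a made-up blocklist. The second helper is an assumption for illustration, not a description of how Bivio's product works. It reflects one plausible anti-pharming heuristic: flagging DNS queries sent to resolvers the network operator has not approved.

```python
def is_blocked(hostname: str, blocklist: set) -> bool:
    """True if the hostname or any parent domain of it is on the blocklist."""
    labels = hostname.lower().rstrip(".").split(".")
    # www.adult.example -> checks "www.adult.example", "adult.example", "example"
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))

def is_rogue_dns(dst_ip: str, dst_port: int, approved_resolvers: set) -> bool:
    """Flag DNS (port 53) traffic sent to a resolver the operator did not approve."""
    return dst_port == 53 and dst_ip not in approved_resolvers

BLOCKLIST = {"adult.example", "malware.example"}  # hypothetical entries
APPROVED = {"10.0.0.53"}                          # hypothetical in-house resolver

print(is_blocked("www.adult.example", BLOCKLIST), is_rogue_dns("198.51.100.66", 53, APPROVED))
```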

Others go further, using their role at the edge of an enterprise network as a proxy for network clients to decrypt Secure Sockets Layer (SSL) content in Web sessions, essentially executing a “man-in-the-middle” attack on their users. Barracuda Networks, for example, recently introduced a new version of its firewall firmware with social network monitoring features that can decrypt SSL traffic to Facebook and other social networking services, then check the content of that traffic for policy violations (including playing Facebook games during work hours).

Companies want these capabilities for a variety of reasons that fall loosely under “security,” including compliance with “e-discovery” requirements and preventing confidential data loss. But those capabilities also can be used for more wide-ranging monitoring of network users. For example, 13 of Blue Coat’s application firewalls were illegally transferred to Syria by way of a distributor in Dubai. Their Web-filtering capabilities were allegedly used by the Syrian government to identify bloggers and Facebook users who expressed anti-government views within the country.

The privacy risks created by corporate use of these systems are significantly larger than those posed by government surveillance in the US, the EFF’s Eckersley said. “The systems that Barracuda and other companies are building are ripe for abuse. They have a small and debatable range of legitimate uses, and a large number of potentially illegitimate uses.” The ability these tools provide to run what are essentially large-scale “man-in-the-middle” attacks against employees and customers, he said, creates the risk of the data being abused by the company or its IT staff.

DPI applications go far beyond simply enforcing policy. Once network operators started using DPI-based systems for security, other applications outside of security became possible as well. “The first one outside of the security market to use DPI was the (network) traffic management space,” said Bivio’s Amir. Companies such as Sandvine and Procera Networks built network traffic management systems that used DPI to improve overall network performance by giving priority to specific types of network traffic, performing “traffic shaping” or “packet shaping” to throttle bandwidth for some applications while giving priority to others.
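Traffic shaping is commonly built on a token bucket. Each traffic class earns byte "tokens" at a fixed rate up to a burst limit, and a packet may go out only if enough tokens are available. The sketch assumes DPI has already labeled each packet with an application name. The class names, rates, and the `shape` helper are invented for illustration.

```python
class TokenBucket:
    """Refill at `rate` bytes per second, holding at most `burst` bytes of credit."""

    def __init__(self, rate: float, burst: float):
        self.rate = rate
        self.burst = burst
        self.tokens = burst
        self.last = 0.0

    def allow(self, size: int, now: float) -> bool:
        # Credit the time elapsed since the last packet, capped at the burst size.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= size:
            self.tokens -= size
            return True
        return False  # over this class's rate

# Hypothetical per-application policy: squeeze P2P, leave VoIP effectively unshaped.
buckets = {
    "bittorrent": TokenBucket(rate=125_000, burst=250_000),   # about 1 Mbit/s
    "voip": TokenBucket(rate=12_500_000, burst=25_000_000),   # about 100 Mbit/s
}

def shape(app: str, size: int, now: float) -> bool:
    """Applications without a bucket are never throttled."""
    bucket = buckets.get(app)
    return True if bucket is None else bucket.allow(size, now)

sent_at_t0 = sum(shape("bittorrent", 1500, now=0.0) for _ in range(200))
print(sent_at_t0)  # full-size packets admitted from the initial burst allowance
```

A `False` result means the packet exceeds its class's rate. A shaper would typically queue and delay such a packet, while a policer would drop it.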


“We can do a better job with network quality of service if the QOS is based on applications, and maybe subscribers, and use information that’s in the data flow already, but not if you just looked at IP addresses,” Amir explained.

Information discovered within the application data in packets could also be used by ISPs for other purposes, such as targeted advertising. One failed DPI-based effort came from a company called NebuAd. It tried to sell ISPs on this advertising idea, signing up Charter Communications and some smaller providers for a trial of a service that not only monitored the content of users’ Web traffic to target ads, but even injected data into packets, adding JavaScript that dropped tracking cookies into users’ browsers to do even more thorough behavior-based targeting of advertisements. NebuAd went bankrupt after it drew the attention of Congress, and Charter and the other ISPs in the trial dropped the “enhanced online advertising service” the company provided.

Other behavior-based marketing companies, such as Phorm, continue to offer “Web personalization” services that combine discovery of users’ interests with DPI-based Web security that blocks malicious sites. Another firm, Global File Registry, aims to go further by injecting ISPs’ own advertisements into search-engine results through DPI and packet forging. The company has combined file-recognition technology from Kazaa with DPI to make it possible for ISPs to re-route links to pirated files online to sites offering to sell licensed versions of them.

Comcast has already tested the anti-piracy waters with DPI, running afoul of the FCC’s efforts to enforce network neutrality. The company’s ISP business, which uses Sandvine’s DPI technology, moved to block peer-to-peer file sharers using BitTorrent as part of its traffic management. The FCC ordered Comcast to stop (primarily because Comcast was injecting forged packets into network traffic to shut down BitTorrent sessions), but that order was later struck down by a federal appeals court.

But these systems were designed for making quick decisions about traffic. And while they generally have reporting features that can give security managers and analysts insight into what traffic (and which user) has violated a particular policy, there’s a limit to how much information about that traffic they can capture effectively.

Drinking from the fire hose

On the other end of the spectrum is packet capture technology, which monitors the traffic passing through a network interface and records all of it to disk for forensic analysis. When used with the right analysis tools, packet capture systems such as the DeepSee appliances from Solera Networks allow security analysts to reconstruct the entirety of transactions between two systems across the Internet gateway, at sustained rates of five gigabits per second and traffic peaks of up to 10 gigabits per second. At the sustained rate, that adds up to about 54 terabytes of captured data per day. Even at the 10:1 compression ratio Solera advertises for its new Solera DB storage architecture, the cost of storing all that data, especially for larger networks, quickly adds up.
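The 54-terabyte figure follows directly from the sustained line rate, and a short calculation makes the storage problem concrete. The helper name is hypothetical, and the units are decimal (1 TB = 10^12 bytes).

```python
SECONDS_PER_DAY = 86_400

def daily_capture_tb(rate_gbps: float, compression: float = 1.0) -> float:
    """Decimal terabytes written per day at a sustained line rate."""
    bytes_per_second = rate_gbps * 1e9 / 8  # bits -> bytes
    return bytes_per_second * SECONDS_PER_DAY / compression / 1e12

print(daily_capture_tb(5))      # sustained 5 Gbit/s
print(daily_capture_tb(5, 10))  # the same traffic at 10:1 compression
print(daily_capture_tb(10))     # if the 10 Gbit/s peak were sustained all day
```

That is about 54 TB a day uncompressed, and still roughly 5.4 TB a day at 10:1 compression, for a single monitored link.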

Packet capture is “valuable, but it’s limited,” Amir said. “You can’t record the whole Internet; you can’t record things in an unlimited fashion and expect to have anything meaningful to go back to. That’s a short-term solution for smaller networks. What if there was a breach that you discover three or four months later? How do you go back and see what happened on your network? That technology has not been developed until very recently.”

That technology is actually a synthesis of two. The first is DPI-based network monitoring systems that pre-process network data—capturing and storing not entire packets, but selective metadata from them and their aggregated application data such as e-mail attachments, instant messages, and social media posts.

“There’s no limit to the data you can extract from the payload, as long as you understand the payload,” said Amir. “But there’s a tradeoff of how much data you’re going to extract with how much storage capacity that’s going to take. If you go too deep, you’re sliding toward the packet capture realm. If you extract too little, you’re essentially back to IP logs which aren’t terribly useful.”

NarusInsight, Narus’ DPI-based network monitoring and capture tool, is designed to find a balance to that equation. It uses a network probe device called Intelligent Traffic Analyzer, which gets “tapped” into a network choke point. “There are usually six to 14 tap points in an enterprise network” belonging to customers of the scale Narus usually deals with, said Narus’ Harrington, “usually at the uplinks to the network backbone.”

Instead of grabbing everything that passes, the ITA watches for anomalies in traffic and aggregates packets into two kinds of “vectors” for each session: a human-readable transcript of all the packets in a particular connection, and an aggregation of all the application data that was sent in that session.
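A small aggregation sketch can illustrate the two per-session "vectors." Narus's actual formats are proprietary and are not described here. The record layout, the `SessionVectors` type, and the choice to key sessions by the unordered pair of endpoints (so both directions of a connection land together) are all assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass, field

@dataclass
class SessionVectors:
    transcript: list = field(default_factory=list)          # one readable line per packet
    app_data: bytearray = field(default_factory=bytearray)  # payload bytes in arrival order

def aggregate(packets) -> dict:
    """packets: (timestamp, src, dst, payload) tuples, where src and dst are "ip:port" strings."""
    sessions = defaultdict(SessionVectors)
    for ts, src, dst, payload in packets:
        key = tuple(sorted((src, dst)))  # same key for both directions of a connection
        vectors = sessions[key]
        vectors.transcript.append(f"{ts:.3f} {src} -> {dst} {len(payload)} bytes")
        vectors.app_data.extend(payload)
    return dict(sessions)

packets = [
    (0.0, "10.0.0.5:51515", "198.51.100.7:25", b"EHLO client\r\n"),
    (0.1, "198.51.100.7:25", "10.0.0.5:51515", b"250 OK\r\n"),
]
for key, vectors in aggregate(packets).items():
    print(key, vectors.transcript, bytes(vectors.app_data))
```

A fuller version would keep each direction's payload separate and include the transport protocol in the session key. Both are left out here for brevity.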

Narus’ ITAs support network taps running at speeds from 100 megabits to 10 gigabits per second in full duplex, so a single 10-gigabit tap can carry up to 20 gigabits per second of combined traffic in both directions. The amount of that data that can be captured and processed “all depends on processing that needs to be done,” Harrington said, and that depends on how many parameters (or “tag pairs”) the system is configured to detect.

“Typically with a 10 gigabit Ethernet interface, we would see a throughput rate of up to 12 gigabits per second with everything turned on. So out of the possible 20 gigabits, we see about 12. If we turn off tag pairs that we’re not interested in, we can make it more efficient.”

The data from the ITA is then sent using a proprietary messaging protocol to a collection of logic servers: virtual machines running on rackmounted Dell server hardware that further aggregate and process the data. A single Narus ITA can process the full contents of 1.5 gigabytes worth of packet data per second, which works out to 5,400 gigabytes per hour, or 129.6 terabytes per day per network tap. By the time the data is processed into aggregated results by the logic servers, petabytes of daily raw network traffic have been reduced down to gigabytes of tabular data and captured application data.
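A quick check shows the throughput figures above are consistent with one another. The helper below is hypothetical and uses decimal units. The 6-to-14 range is the number of tap points Harrington cited for an enterprise-scale network, and treating every tap as running at full rate around the clock is an assumption that gives an upper bound.

```python
def daily_volume_tb(gbps: float) -> float:
    """Decimal terabytes per day at a sustained rate in gigabits per second."""
    bytes_per_second = gbps * 1e9 / 8
    return bytes_per_second * 86_400 / 1e12

per_second_gb = 12 / 8               # 12 Gbit/s observed throughput -> 1.5 GB/s
per_hour_gb = per_second_gb * 3_600  # gigabytes per hour
per_tap_tb = daily_volume_tb(12)     # terabytes per day for one tap

for taps in (6, 14):
    print(f"{taps} taps: {taps * per_tap_tb / 1000:.2f} PB/day")
```

At 6 to 14 taps, that works out to roughly 0.8 to 1.8 petabytes of raw traffic per day. This fits the "petabytes" that the logic servers reportedly reduce to gigabytes.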

But as impressive as the analytical power of a NarusInsight environment is, there are still limits to the type of analysis that can be done by pattern matching in a small window of data. Unknown threats, such as “zero day” exploits that have no known signatures and that evade statistical analysis by disguising themselves as legitimate network traffic, could slip past DPI tools used on their own. This can happen even if there are signs elsewhere in IT systems, such as server system logs and system auditing tools, that something is amiss.

For Narus users, that typically means exporting the data out of NarusInsight’s analytical environment to another tool for forensic investigation and other deeper analysis. This could be a data warehouse or a “big data” analytical database like Palantir, Hadoop-based systems like Cloudera and Hortonworks, or Splunk. Narus recently announced a partnership with Teradata to provide large-scale analytics of NarusInsight’s output, using Tableau’s visualization software and analytical SQL queries as a front end for analysts.

“We provide our customers with a starting kit, a common dashboard” for analysis, Harrington said. From there, they can summarize and aggregate the information from the various log data, which are stored in Teradata’s multidimensional data warehouse format. And, he added, Narus is working on a Hadoop-based analytical tool using MapReduce processes to dig even further into network traffic patterns.

But other players in the network security market are moving to put the analytical power of big data systems at the center of their network monitoring solutions, rather than as an add-on. Big-volume, high-speed data storage and management technologies like Hadoop grew out of the needs of “hyperscale” Web services such as Google. By harnessing this power, data analysis software from Splunk and LogRhythm or integrated solutions such as Bivio’s NetFalcon make it possible to throw much deeper analytical horsepower at DPI data and aggregate it with other sources, both in real time and as part of long-ranging forensic analysis.

Google-sized surveillance

NetFalcon launched as a product just over a year ago. It uses a columnar database format similar to Google’s BigTable and Teradata’s Aster database systems as its data store, and can perform both real-time and after-the-fact analysis on data picked up by its network probes. Each probe can handle up to 10 gigabits per second, and the “correlation engine” that takes in all of the inputs can pull in over 100 gigabits per second for processing. NetFalcon’s “retention server” database takes inputs not only from the system’s network probes, but also pulls in feeds from external log sources, Simple Network Management Protocol “trap” events, and other databases. It correlates all the traffic and event data for weeks or even months. “Hundreds of terabytes or petabytes of data, but laid out in such a way that you can do queries and searches very rapidly,” Amir said.

In an enterprise environment, Bivio could store months of data from these sources; in law enforcement applications, that data could scale to years. “We’re not storing the network data, we’re classifying it, categorizing it, breaking it up into its constituent pieces based on DPI, preprocessing it, and correlating it with external events,” Amir explained. The sources of information that could be pulled into NetFalcon’s database extend beyond the typical IT sources. “You could correlate info you’re getting over a mobile network along with geolocation data,” he continued. “Then when you’re doing the analytics, have the data right there and take advantage of it.” Some of the potential uses include correlating physical devices with online accounts to uncover individuals’ online identities, and establishing the connections between individuals by mapping their network interactions.

Splunk allows organizations to do the same sort of fused analysis, taking in data generated by an organization’s existing DPI-powered systems and combining it with server logs or just about any other machine- or human-generated data that the organization wants to pull in. Splunk is designed to process large quantities of raw ASCII data from nearly any source, applying MapReduce functions to extract fields from the raw data, index it, and perform analytical and statistical queries. Mark Seward, Splunk’s director of marketing for security and compliance, described it to Ars as “Google meets Excel.”
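Splunk's search language is not reproduced here, but the underlying idea can be sketched in plain Python: extract fields from raw text at search time, then aggregate with map and reduce steps. The log format, regular expression, and function names are hypothetical.

```python
import re
from collections import Counter
from functools import reduce

# Hypothetical firewall log format; fields are pulled out at search time, not at ingest.
LINE = re.compile(r"src=(?P<src>\S+) dst=(?P<dst>\S+) action=(?P<action>\w+)")

def map_line(line: str) -> Counter:
    """Map step: extract fields from one raw line and emit a count for (src, action)."""
    m = LINE.search(line)
    return Counter({(m["src"], m["action"]): 1}) if m else Counter()

def reduce_counts(total: Counter, part: Counter) -> Counter:
    """Reduce step: merge partial counts."""
    total.update(part)
    return total

logs = [
    "Aug 28 10:00:01 fw1 src=10.0.0.5 dst=203.0.113.9 action=deny",
    "Aug 28 10:00:02 fw1 src=10.0.0.5 dst=203.0.113.9 action=deny",
    "Aug 28 10:00:03 fw1 src=10.0.0.8 dst=198.51.100.7 action=allow",
    "an unparseable line is simply skipped",
]
totals = reduce(reduce_counts, map(map_line, logs), Counter())
print(totals.most_common())
```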

Splunk can also distribute its flat-file databases across multiple file stores. The store for a particular application “can be a 10 terabyte flat file distributed across multiple offices around the globe,” Seward said. “When you search Splunk from a search head, it doesn’t care where the data is. It sees it all as virtual flat file.”

While Splunk is a general-purpose analytics system, there are enterprise security and forensics dashboards that have been prebuilt for it, and there’s an existing marketplace of analytics applications that can be put on top of the system to do different sorts of analysis. “We have a site called Splunkbase that has over 300 apps,” Seward said, “about 40 of which are security apps written by our engineers or by customers. A couple [apps] are integrations with Solera and NetWitness.” Even raw packet data can be dumped in ASCII into Splunk in real time and time-indexed, if someone wants to go to that level of detail.

The addition of log and other data from the network is essential to catching security problems caused by things like an employee bringing a device to work that has been infected by malware or otherwise been exploited, Seward said. “What security analysts are finding is that the security architecture of the enterprise gets bypassed when you have people bring their own device to work. Those can get spearphished, or get malware, and when they come in they can allow attacks in that bypass half the gear you have to detect intrusions. Malware does its thing behind your credentials.”

Having access to authentication data for users, and combining it with location information—such as when they’ve used an electronic key card to enter or leave a building, or when they log into various applications—allows systems like Splunk and NetFalcon to find a baseline pattern in people’s behavior and watch for unusual activities. “You have to think like a criminal,” Seward said, “and monitor for credentialed activities that, looked at in a time-indexed pattern, look odd.”
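A baseline of this kind can be as simple as remembering which hours of the day each person normally appears in badge and login records, then flagging activity far outside them. The sketch below is a deliberately crude, hypothetical illustration with invented users and timestamps. Commercial tools build much richer statistical profiles.

```python
from collections import defaultdict
from datetime import datetime

def hour_distance(a: int, b: int) -> int:
    """Distance between two hours on a 24-hour clock (23:00 and 00:00 are 1 apart)."""
    d = abs(a - b) % 24
    return min(d, 24 - d)

def build_baseline(events):
    """events: (user, datetime) pairs from badge and login logs."""
    baseline = defaultdict(set)
    for user, ts in events:
        baseline[user].add(ts.hour)
    return baseline

def looks_odd(baseline, user: str, ts: datetime, slack: int = 1) -> bool:
    """True if `ts` is more than `slack` hours from every hour this user was seen."""
    hours = baseline.get(user, set())  # never-seen users count as odd
    return not any(hour_distance(ts.hour, h) <= slack for h in hours)

history = [
    ("alice", datetime(2012, 8, 20, 9, 5)),
    ("alice", datetime(2012, 8, 21, 10, 30)),
    ("alice", datetime(2012, 8, 22, 17, 45)),
]
baseline = build_baseline(history)
print(looks_odd(baseline, "alice", datetime(2012, 8, 23, 11, 0)))  # near usual hours
print(looks_odd(baseline, "alice", datetime(2012, 8, 24, 3, 0)))   # a 3 a.m. login
```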

One reason why companies are increasingly interested in tools like NetFalcon and Splunk is for “data loss prevention”—blocking leaks of sensitive corporate data via e-mail, social media, and instant messaging, or the wholesale theft of data by hackers and malware using encrypted and anonymized channels.

“TOR is a good example,” Amir said. “Things like onion routers are sophisticated tools designed exactly to circumvent real-time mechanisms that would block that sort of traffic.” Analysts and administrators could search for traffic going to known onion router endpoints, and follow the trail within their own networks back to the originating systems.
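Following that trail amounts to joining stored flow records against a list of known relay addresses. The sketch makes two assumptions: such a list is already available (the Tor network's relays are publicly listed), and flow records carry the internal host. The record layout, function name, and addresses are hypothetical.

```python
def trace_tor_traffic(flows, relays):
    """flows: (timestamp, internal_host, remote_ip, remote_port) records.
    relays: set of (ip, port) pairs for known onion-router endpoints.
    Returns each internal host that contacted a relay, with its first contact time."""
    first_contact = {}
    for ts, host, remote_ip, remote_port in sorted(flows):  # oldest records first
        if (remote_ip, remote_port) in relays and host not in first_contact:
            first_contact[host] = ts
    return first_contact

relays = {("192.0.2.50", 9001)}  # hypothetical relay list entry
flows = [
    (30, "10.0.0.7", "192.0.2.50", 9001),
    (10, "10.0.0.7", "192.0.2.50", 9001),
    (20, "10.0.0.9", "198.51.100.7", 443),
]
print(trace_tor_traffic(flows, relays))
```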

Because these systems have a long memory, they’re able to catch patterns over longer periods of time and spot them instantly when they occur again, acting on them automatically. Both NetFalcon and Splunk are capable of launching automated responses to what gets discovered in data. In Splunk, the events are launched by continuous real-time searches of data as it’s streamed. NetFalcon’s “triggering” works in a similar way, as NetFalcon’s correlation engine processes incoming packet data, or when patterns are found when running an analytical query. Those actions could be sending configuration changes to a firewall, changing the settings on network capture devices, or sending an alert to an administrator about a problem.
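The trigger mechanism both products describe reduces to a rule loop: each incoming event is tested against a set of conditions, and any match fires an action. In this sketch, the actions only record what they would do. The rule names and event fields are hypothetical, as are `blocked` and `alerts`, which stand in for a firewall change and an administrator notification.

```python
from typing import Callable, Iterable, List, Tuple

# (name, condition, action): a hypothetical rule shape, not Splunk's or NetFalcon's.
Trigger = Tuple[str, Callable[[dict], bool], Callable[[dict], None]]

def run_triggers(events: Iterable[dict], triggers: List[Trigger]) -> List[str]:
    """Test each event as it streams in; fire the action of every matching trigger."""
    fired = []
    for event in events:
        for name, condition, action in triggers:
            if condition(event):
                action(event)
                fired.append(name)
    return fired

blocked = set()  # stand-in for a firewall configuration change
alerts = []      # stand-in for a message to an administrator

triggers = [
    ("ids-critical", lambda e: e.get("severity") == "critical",
     lambda e: blocked.add(e["src"])),
    ("tor-contact", lambda e: e.get("tag") == "tor",
     lambda e: alerts.append(f"Tor traffic from {e['src']}")),
]

stream = [
    {"src": "203.0.113.9", "severity": "critical"},
    {"src": "10.0.0.7", "tag": "tor"},
    {"src": "10.0.0.8", "severity": "low"},
]
print(run_triggers(stream, triggers))
```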

Security on a budget

NetFalcon is targeted at very specific audiences: law enforcement agencies, telecom carriers and large ISPs, and very large companies in heavily regulated or secretive industries that are willing to pay for what amounts to an intelligence-community-grade solution. But for other organizations that already have application firewalls, intrusion detection systems, or other DPI systems installed, there may not be a budget or a need for Bivio’s type of technology. Take, for example, the University of Scranton, which uses Splunk to drive its information security operations.

Unlike NetFalcon, Splunk “is a huge database, but it doesn’t come with preconfigured alerts,” said Anthony Maszeroski, Information Security Manager at the University of Scranton (located in Scranton, Pennsylvania). The university has about 5,200 students—about half of whom live on campus—and has turned Splunk into the hub of its network security operations, using it to automate a large percentage of its responses to emerging threats.

Maszeroski said the IT department at Scranton pulls in data from a variety of systems. The campus’ wireless and wired routers send logs of Dynamic Host Configuration Protocol (DHCP) and Network Address Translation (NAT) events to Splunk; those logs include the physical MAC address of each connecting device along with a timestamp. This allows administrators to search the database by device address and follow where a device has connected from on campus. The database also pulls in information on outbound DNS queries and other types of application traffic, enterprise system logs, and events from the University’s intrusion prevention system. The Splunk database of the University of Scranton Information Security Office is “close to a terabyte” in size, Maszeroski said, and “our standard op procedure is to throw everything away after 90 days. We’re also limited by budget and storage capacity.”
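Searching DHCP logs by device address, as described above, is essentially a filter and a sort. This sketch assumes each log record has already been tagged with the router or access point that reported it, used here as a rough location. The field layout, names, and addresses are hypothetical.

```python
def device_history(dhcp_events, mac: str):
    """dhcp_events: (timestamp, mac, ip, location) tuples.
    Returns (timestamp, location, ip) for one device, oldest first."""
    wanted = mac.lower()  # normalize case, since log sources format MACs differently
    return sorted((ts, loc, ip) for ts, m, ip, loc in dhcp_events if m.lower() == wanted)

events = [
    (1000, "00:1A:2B:3C:4D:5E", "10.1.4.20", "library-ap-2"),
    (500, "00:1a:2b:3c:4d:5e", "10.1.9.3", "dorm-ap-7"),
    (700, "66:77:88:99:AA:BB", "10.1.4.21", "library-ap-2"),
]
trail = device_history(events, "00:1A:2B:3C:4D:5E")
print(trail[-1])  # most recent sighting
```

The last entry in the returned list is the device's most recent sighting, which answers the question behind the stolen-laptop searches the University runs.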


One frequent activity that Splunk has helped the University automate is processing Digital Millennium Copyright Act takedown notices after a student is found sharing pirated content, whether from a site hosted on their own computer or over BitTorrent. “We needed an automated, instant way of locking those down,” Maszeroski said. Data brought into Splunk can be searched for BitTorrent traffic, which can then be tied to a device by its MAC address; the University’s information security office has built a Java application that uses Splunk’s Web API to find the offending MAC address and then “cut the person off at a switch or wireless level.”
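A copyright notice typically identifies a public IP address, a port, and a time. Tying it to a device therefore usually takes two lookups: first through the NAT logs, then through the DHCP logs mentioned earlier. The sketch below assumes those logs have already been turned into time-bounded records. The record layouts, function names, and addresses are hypothetical, and the sketch does not model Scranton's Java application or Splunk's API.

```python
def lookup(records, when, match):
    """Return the first record whose [start, end) window contains `when` and satisfies `match`."""
    return next((r for r in records if r[0] <= when < r[1] and match(r)), None)

def resolve_notice(nat_log, leases, public_ip, public_port, when):
    """nat_log: (start, end, private_ip, public_ip, public_port) translations.
    leases: (start, end, ip, mac) DHCP leases.
    Returns the MAC address behind a public ip:port at time `when`, or None."""
    nat = lookup(nat_log, when, lambda r: r[3] == public_ip and r[4] == public_port)
    if nat is None:
        return None
    lease = lookup(leases, when, lambda r: r[2] == nat[2])  # private IP -> device
    return lease[3] if lease else None

nat_log = [
    (100, 200, "10.1.9.3", "198.51.100.20", 40001),
    (100, 200, "10.1.4.20", "198.51.100.20", 40002),
]
leases = [
    (0, 3600, "10.1.9.3", "00:1a:2b:3c:4d:5e"),
    (0, 3600, "10.1.4.20", "66:77:88:99:aa:bb"),
]
print(resolve_notice(nat_log, leases, "198.51.100.20", 40001, 150))
```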

DHCP data can be used to track down where offending devices are. And the DHCP log data allows the information security office to help the University’s public safety department look for stolen assets. When someone reports a stolen laptop or tablet, the office can do a quick search to see where it has been on the campus network and if it’s still connected.

Splunk’s dashboard also makes it easier to pick up on things that fall outside the norm. “We can do a statistical look at logs to see if an account is sending too much e-mail to check for compromised Web mail accounts,” Maszeroski said. “Also, it’s very unusual for someone to be logging into our Web server from Nigeria. We can look for multiple usernames logging in from one IP address, or look for one logging in from different geographic areas.” The same goes for the University’s VPNs.
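Each of the checks Maszeroski describes can be sketched as a small aggregation. These are illustrative choices, not the University's actual rules:

- the z-score threshold
- the three-usernames limit
- the assumption that each login record already carries a country from some GeoIP lookup

The sample data is invented.

```python
from collections import defaultdict
from statistics import mean, pstdev

def heavy_senders(sent_per_account: dict, z: float = 3.0) -> list:
    """Accounts whose outbound mail count sits more than z standard deviations above the mean."""
    counts = list(sent_per_account.values())
    mu, sigma = mean(counts), pstdev(counts)
    if sigma == 0:
        return []
    return sorted(a for a, n in sent_per_account.items() if (n - mu) / sigma > z)

def shared_ip_logins(logins, limit: int = 3) -> list:
    """logins: (user, ip, country) tuples. IPs used by more than `limit` distinct usernames."""
    users_by_ip = defaultdict(set)
    for user, ip, _country in logins:
        users_by_ip[ip].add(user)
    return sorted(ip for ip, users in users_by_ip.items() if len(users) > limit)

def multi_country_users(logins) -> list:
    """Usernames seen logging in from more than one country."""
    countries = defaultdict(set)
    for user, _ip, country in logins:
        countries[user].add(country)
    return sorted(user for user, seen in countries.items() if len(seen) > 1)

sent = {f"user{i}": 10 for i in range(20)}
sent["mallory"] = 500
logins = [
    ("alice", "192.0.2.1", "US"),
    ("bob", "203.0.113.5", "US"), ("carol", "203.0.113.5", "US"),
    ("dave", "203.0.113.5", "US"), ("erin", "203.0.113.5", "US"),
    ("alice", "198.51.100.9", "NG"),
]
print(heavy_senders(sent), shared_ip_logins(logins), multi_country_users(logins))
```

A single z-score check is weak when there are few accounts, because an outlier inflates the very standard deviation it is measured against. A baseline from earlier days, or a median-based measure, is usually a sturdier reference.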

“If there’s an event we’re absolutely certain is an indication of badness, we can programmatically run a script within a minute to cut off IP address at our network perimeter.”

Absolute power

Yes, these capabilities make it possible for organizations both to prevent security breaches and to track down the reasons for the ones that slip by. But the ability to monitor almost any kind of network traffic, combine it in real time with location-based data (plus other physical-world information), and then store it indefinitely is a huge privacy concern. Even without logging on, individuals can leave patterns in their digital footprints that identify them and that could be used by others for less-than-ethical purposes, said EFF’s Eckersley.

“If you’re in the habit of loading a few particular blogs,” Eckersley said, “that pattern will be repeated whether you’re in the office or at home. If networks end up with extensively deployed pattern recognition systems, users are going to need very strong assurances that the data isn’t being kept. And it’s going to be difficult for companies to give that sort of assurance, because the tendency is to keep everything. Our advice is not to work for employers who demand to survey you in the office.”

And companies in some parts of the world, including ISPs, may soon find themselves being asked to keep everything. In the UK, for example, a proposed law announced in the Queen’s Speech in April would require ISPs and others to retain metadata obtained from deep packet inspection for digital communications (e-mails, text messages, instant messages, and webpage visits, among other things) for up to a year.

In the US, Senator Joe Lieberman’s Cybersecurity Act of 2012 would have pushed for larger use of systems like NetFalcon and other DPI-based systems that provide “continuous monitoring” within government. It would have explicitly given private network operators the go-ahead “notwithstanding the… Foreign Intelligence Surveillance Act of 1978… and the Communications Act of 1934” to survey their networks and share information collected that might have some bearing on cybersecurity with the Department of Homeland Security and other agencies. The bill was filibustered by Republicans because of regulations it put on industry, but parts of the bill may be pushed forward by the Obama administration as part of an executive order.

Perhaps the proliferation of such surveillance is inevitable—it is what allowed the Olympics to proceed without any major incident, after all. And certainly, the use of big data analytics would be an improvement on some of the electronic intelligence systems currently used by US agencies, considering the recent revelations about the sad state of the FBI’s management of surveillance data. But the fact remains that these systems, as automated as they are, are only as good as the people who use them—both in terms of performance and privacy.